
In this series of articles, we provide a brief dictionary of terms surrounding data science, including AI, machine learning, and deep learning.

What is Natural Language Processing in Data Science in 2020?

Natural Language Processing is a branch of Artificial Intelligence that provides a vehicle for computers to understand, interpret, and manipulate natural human language. It draws on a number of fields, including computer science and computational linguistics, to bridge the gap between human communication and computer understanding.

The post What is Natural Language Processing in Data Science in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-natural-language-processing-in-data-science-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-natural-language-processing-in-data-science-in-2020

So, what is Long Short Term Memory in Data Science in 2020?

Recurrent neural networks, of which long short-term memory units are the most powerful and well-known subset, are a type of artificial neural network designed to recognize patterns in sequences of data, such as numerical time series data emanating from sensors, stock markets and government agencies, but also text, genomes, handwriting and the spoken word. A long short-term memory network is a special kind of recurrent neural network optimized for learning from and acting upon time-related data that may have undefined or unknown lengths of time between relevant events. Long short-term memory networks work very well on a wide range of problems and are now widely used. They were introduced in 1997 by Hochreiter & Schmidhuber, and were refined and popularized by many subsequent researchers.
The post What is Long Short Term Memory in Data Science in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-long-short-term-memory-in-data-science-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-long-short-term-memory-in-data-science-in-2020

So, what is Explainable AI in Data Science in 2020?

Explainable (interpretable) AI models address a recognized problem: as we build newer and more innovative applications for neural networks, the question “How do they work?” becomes more and more important. Opening the black box to enable transparency matters more as we realize that we don’t really know why AI models make the choices they do. As models become more complex, producing an interpretable version of the model becomes more difficult.

The post What is Explainable AI in Data Science in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-explainable-ai-in-data-science-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-explainable-ai-in-data-science-in-2020

What is Data Storytelling in Data Science?

Data storytelling is a methodology for communicating information, tailored to a specific audience, with a compelling narrative. It is the last ten feet of your data analysis and arguably the most important aspect. The rate at which businesses collect data today is phenomenal. You can now collect data on every aspect of your business and, in fact, your life. Despite the surge of solutions such as BI tools, dashboards, and spreadsheets over recent decades, businesses are still unable to take full advantage of the opportunities hidden in their data.
The last step of the data science process involves communicating potentially complex machine learning results to project stakeholders who are not experts in data science. Data storytelling is an important skill set for all data scientists.

The post What is Data Storytelling in Data Science in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-data-storytelling-in-data-science-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-data-storytelling-in-data-science-in-2020

A Box and Whisker Plot (or Box Plot) is a convenient way of visually displaying a data distribution through its quartiles. The lines extending parallel from the boxes are known as the “whiskers”, which indicate variability outside the upper and lower quartiles. Outliers are sometimes plotted as individual dots in line with the whiskers. Box Plots can be drawn either vertically or horizontally. Although Box Plots may seem primitive in comparison to a Histogram or Density Plot, they have the advantage of taking up less space, which is useful when comparing distributions between many groups or datasets. Here are the types of observations one can make from viewing a Box Plot:

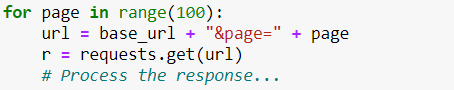
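
As a rough illustration of the numbers a Box Plot draws, the sketch below computes the quartiles, whisker ends and outliers for a small sample. It assumes the common convention that whiskers reach to the furthest points within 1.5 times the interquartile range, which this article does not specify. The function name `box_plot_stats` and the sample values are made up for the example.

```python
from statistics import quantiles


def box_plot_stats(values, whisker_factor=1.5):
    """Return the summary numbers a box plot would draw for `values`.

    Assumption: whiskers extend to the most extreme points that lie within
    `whisker_factor` times the interquartile range of the box edges.
    """
    data = sorted(values)
    # Three cut points that split the data into four equal-sized groups.
    q1, median, q3 = quantiles(data, n=4, method="inclusive")
    iqr = q3 - q1
    lower_fence = q1 - whisker_factor * iqr
    upper_fence = q3 + whisker_factor * iqr
    inside = [v for v in data if lower_fence <= v <= upper_fence]
    outliers = [v for v in data if v < lower_fence or v > upper_fence]
    return {
        "q1": q1,
        "median": median,
        "q3": q3,
        "whisker_low": min(inside),
        "whisker_high": max(inside),
        "outliers": outliers,
    }


sample = [2, 4, 4, 5, 6, 7, 8, 9, 30]  # hypothetical measurements
stats = box_plot_stats(sample)
print(stats)
```

For this sample the box spans 4 to 8 with the median at 6, the whiskers end at 2 and 9, and 30 would be drawn as an individual outlier dot.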

Two of the most commonly used variations of the Box Plot are variable-width Box Plots and notched Box Plots.

The post Data Visualization Explained – Box and Whisker Plot appeared first on Data Science PR. Via https://datasciencepr.com/data-visualization-explained-box-and-whisker-plot/?utm_source=rss&utm_medium=rss&utm_campaign=data-visualization-explained-box-and-whisker-plot

Now, what is a Cost Function in Data Science?

In mathematical optimization and decision theory, a loss function or cost function is a function that maps an event or values of one or more variables onto a real number intuitively representing some “cost” associated with the event. An optimization problem seeks to minimize a loss function. A cost function represents a value to be minimized, like the sum of squared errors over a training set. Gradient descent is a method for finding a minimum of a function of multiple variables, so you can use gradient descent to minimize your cost function.

The post What is a Cost Function in Data Science? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-a-cost-function-in-data-science/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-a-cost-function-in-data-science

Now, what is a Convolutional Neural Network in Data Science?

A Convolutional Neural Network is a common method used with deep learning, and is typically associated with computer vision and image recognition. Convolutional Neural Networks employ the mathematical concept of convolution to simulate the neural connectivity lattice of the visual cortex in biological systems. Convolution can be viewed as a sliding window over a matrix representation of an image.
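
The sliding-window view can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not how any particular library implements it: the function name `slide_window`, the 3x3 image and the 2x2 kernel are invented for the example, there is no padding, the window moves one cell at a time, and the kernel is applied without flipping, as is common in deep learning.

```python
def slide_window(image, kernel):
    """Slide `kernel` over `image` and sum the element-wise products.

    Assumptions: both arguments are rectangular lists of lists, no padding
    is applied, the stride is 1, and the kernel is not flipped.
    """
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for top in range(out_h):            # window position, vertical
        row = []
        for left in range(out_w):       # window position, horizontal
            total = 0
            for i in range(kernel_h):
                for j in range(kernel_w):
                    total += image[top + i][left + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


image = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
kernel = [
    [1, 0],
    [0, 1],
]
feature_map = slide_window(image, kernel)
print(feature_map)  # [[6, 8], [12, 14]]
```

Each output value comes from a window that overlaps its neighbours by one row or one column.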
This allows for the simulation of the overlapping tiling of the visual field.

The post What is Convolutional Neural Network in Data Science? appeared first on Data Science PR. Via https://datasciencepr.com/convolutional-neural-network/?utm_source=rss&utm_medium=rss&utm_campaign=convolutional-neural-network

Now, what is Backpropagation in Data Science?

Backpropagation is an algorithm for supervised learning of artificial neural networks using gradient descent. Given an artificial neural network and an error function, the method calculates the gradient of the error function with respect to the neural network’s weights. It is a generalization of the delta rule for perceptrons to multilayer feedforward neural networks.

The “backwards” part of the name stems from the fact that calculation of the gradient proceeds backwards through the network, with the gradient of the final layer of weights being calculated first and the gradient of the first layer of weights being calculated last. Partial computations of the gradient from one layer are reused in the computation of the gradient for the previous layer. This backwards flow of the error information allows for efficient computation of the gradient at each layer versus the naive approach of calculating the gradient of each layer separately.

Backpropagation’s popularity has experienced a recent resurgence given the widespread adoption of deep neural networks for image recognition and speech recognition. It is considered an efficient algorithm, and modern implementations take advantage of specialized GPUs to further improve performance.

The post What is Backpropagation in Data Science in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-backpropagation-in-data-science-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-backpropagation-in-data-science-in-2020

Neural network activation functions are a crucial component of deep learning. Activation functions determine the output of a deep learning model, its accuracy, and also the computational efficiency of training a model, which can make or break a large-scale neural network. Activation functions also have a major effect on the neural network’s ability to converge and on the convergence speed; in some cases, they might prevent a neural network from converging in the first place. In neural networks, linear and non-linear activation functions produce output decision boundaries by combining the network’s weighted inputs. The ReLU activation function is the most commonly used activation function right now, although the Tanh (hyperbolic tangent) and Sigmoid (logistic) activation functions are also used.

The post What is an Activation Function in Data Science? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-an-activation-function-in-data-science/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-an-activation-function-in-data-science

One of the first places where blockchain technology can make a large impact on the transactional ecosystem within the energy industry is in commodities trading. Companies currently spend millions to build and access proprietary commodity trading platforms that track and execute transactions. Rather than multiple proprietary systems, blockchain technology could be used to ensure security, immediacy and immutability of energy trading. Additionally, there is opportunity in the creation and trading of green certificates and carbon offsets, which are often costly to obtain. Automated smart contracts and metering systems could improve offset accessibility, an approach taken by the Veridium Labs project.
Eliminating Middlemen

Blockchain transactions are also particularly effective at eliminating middlemen, which may serve to lower the costs imposed by energy retailers. As mentioned above, these retailers sell users energy from the utility providers delivering the energy. A more transparent blockchain-based system may allow users to purchase directly from the utility providers. United States-based startup Grid is utilizing the Ethereum blockchain to do just that, enabling users to purchase electricity wholesale rather than going through retailers.

Peer-to-peer transactions

Finally, peer-to-peer transactions, one of crypto’s initial value propositions, are an avenue of improvement for the energy sector. Blockchain systems can allow users to trade energy directly. This is particularly promising for renewable sources of energy like solar and wind, which users can generate themselves. This innovation would essentially allow prosumers to enter the energy market as suppliers. Australian company Power Ledger is allowing users to do just that with its microgrids, which allow prosumers to sell energy to members of their communities.

The post How can blockchain improve transactions in the energy industry? appeared first on Data Science PR. Via https://datasciencepr.com/how-can-blockchain-improve-transactions-in-the-energy-industry/?utm_source=rss&utm_medium=rss&utm_campaign=how-can-blockchain-improve-transactions-in-the-energy-industry

Line Graphs are used to display quantitative values over a continuous interval or time period. A Line Graph is most frequently used to show trends and analyse how the data has changed over time. Line Graphs are drawn by first plotting data points on a Cartesian coordinate grid, then drawing a line connecting these points. Typically, the y-axis has a quantitative value, while the x-axis is a timescale or a sequence of intervals. Negative values can be displayed below the x-axis.
The direction of the lines on the graph works as a nice metaphor for the data: an upward slope indicates where values have increased and a downward slope indicates where values have decreased. The line’s journey across the graph can create patterns that reveal trends in a dataset. When grouped with other lines, individual lines can be compared to one another. However, avoid using more than 3-4 lines per graph, as this makes the chart more cluttered and harder to read. A solution to this is to divide the chart into small multiples.

The post What is a Line Graph in Data Visualization? appeared first on Data Science PR. Via https://datasciencepr.com/line-graph/?utm_source=rss&utm_medium=rss&utm_campaign=line-graph

First, let’s consider the matter from an ethical point of view. Your program should be respectful to the site owner. Remember that every time you load a web page, you’re making a request to a server. When you’re just a human with a browser, there’s not much damage you can do. With a Python script, however, you can execute thousands of requests a second, intentionally or unintentionally. The server then needs to process every request individually. This, combined with the normal user traffic, can overload the server. And this overload can manifest in slowing down the website or even bringing it down altogether. Such a situation usually degrades the experience of real users and can cost the website owner valuable customers.

Obviously, we don’t want that. In fact, if done intentionally, this is considered a crime, the so-called DoS (Denial of Service) attack, or DDoS (Distributed Denial of Service) when it is launched from many machines at once, so we had better avoid it. Given the potential damage this easy technique can do, servers have started employing automatic defense mechanisms against it.

One such protection is to temporarily block a user from the service when the server detects a large amount of activity in a short period of time.
So, even if you are not sending huge numbers of requests, you may get blocked as a preventive measure. And that’s precisely why it is important to know how to limit your rate of requests.

How to Limit Your Rate of Requests When Scraping?

Let’s see how to do this in Python. It is actually very easy. Suppose you have a setup with a “for loop” in which you make a request every iteration, like this:

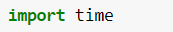

Depending on the other actions you take in the loop, this can iterate extremely fast. So, in order to make it slower, we will simply tell Python to wait a certain amount of time. To achieve this, we are going to use the time library.

It has a function called sleep that “sleeps” the program for the specified number of seconds. So, if we want to have at least 1 second between each request, we can call the sleep function in the for loop, like this:
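
A minimal sketch of such a loop follows. The helper name `fetch_all` and its `fetch` parameter are hypothetical; `fetch` stands in for whatever function actually performs the request, so the pattern can be tried without touching a real site.

```python
import time


def fetch_all(urls, fetch, delay_seconds=1.0):
    """Call fetch(url) for each URL, pausing before every request.

    `fetch` is a placeholder for the function that really makes the request.
    """
    results = []
    for url in urls:
        time.sleep(delay_seconds)  # wait before making the request
        results.append(fetch(url))
    return results


# Stand-in for a real request so the example runs offline.
def fake_fetch(url):
    return "content of " + url


pages = fetch_all(["page-1", "page-2", "page-3"], fake_fetch, delay_seconds=0.01)
print(pages)
```

With the requests library, for example, `fetch` could be `requests.get`, and `delay_seconds` would stay at 1.0 or higher.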

This way, before making a request, Python will always wait 1 second. That’s how we avoid getting blocked and proceed with scraping the webpage.

Source: 365 Data Science Blog

The post How to Limit Your Rate of Requests When Web Scraping in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/how-to-limit-your-rate-of-requests-when-web-scraping-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=how-to-limit-your-rate-of-requests-when-web-scraping-in-2020

Kagi Charts are used to display the general levels of supply and demand of a particular asset by visualising the price action through a series of line patterns. Kagi Charts are time-independent and help filter out the noise that can occur on other financial charts, so that important price movements are displayed more clearly. Recognising the patterns that occur in Kagi Charts is key to understanding them. While Kagi Charts do display dates or time on their x-axis, these are in fact markers for the key price action dates and are not part of a timescale. The y-axis on the right-hand side is used as the value scale.

The line in a Kagi Chart initially moves vertically in the same direction as the price movement and will continue to extend so long as the price movement, however small, maintains the same direction. Once the price reverses by a pre-determined “reversal” amount, the line makes a u-turn and goes in the opposite direction. So, each of the little horizontal lines on the chart indicates where a price reversal has taken place. When a horizontal line joins a rising line with a plunging line it’s known as a “shoulder”, while a horizontal line connecting a plunging line with a rising line is known as a “waist”.

The varying thickness or colour of the line is dependent on the price behaviour. When the price goes higher than a previous “shoulder” reversal, the line becomes thicker (and/or green) and is known as a “Yang line”.
This can be interpreted as an increase in demand over supply for the asset and as a bullish upward trend. Alternatively, when the price breaks below a previous “waist” reversal, the line becomes thinner (and/or red) and is known as a “Yin line”. This signifies an increase in supply over demand for the asset and a bearish downward price trend. Traders use the shift from thin (Yin) to thick (Yang) lines (and vice versa) as signals to buy or sell an asset. A Yin to Yang shift is a signal to buy, while a Yang to Yin shift is a signal to sell.

The post What is Kagi Chart in Data Visualization? appeared first on Data Science PR. Via https://datasciencepr.com/kagi-chart/?utm_source=rss&utm_medium=rss&utm_campaign=kagi-chart

Many people agree that big data is here to stay and not a mere fad. What is less clear to most people is where big data analytics is headed. These technologies are quickly evolving. What does that mean for the businesses that use them now or will soon?

Understanding what’s ahead for big data technologies and use cases is more straightforward if people tune in to what experts have to say. Here are some glimpses into what’s possible, based on their perceptions.

1. A Sharper Focus on Data Governance

As companies continue collecting progressively larger quantities of big data, the risk goes up that they may misuse it. That’s why many experts expect a renewed emphasis on data governance. Peter Bailis, CEO of Sisu Data, said, “Beyond 2020, governance comes back to the forefront.” He also shared his beliefs about the role of data governance: “As platforms for analysis and diagnosis expand, derived facts from data will be shared more seamlessly within a business as data governance tools will help ensure the confidentiality, proper use and integrity of data improve to the point they fade into the background again.”

2. Augmented Analytics Will Speed Decision-Making

Analysts at Gartner see augmented analytics shaping future big data trends. It involves applying technologies like artificial intelligence (AI), machine learning and natural language processing to big data platforms. This helps organizations reach decisions faster and recognize trends more efficiently. Rita Sallam, vice president and analyst at Gartner, suggested what may be on the horizon: “[This trend is] really about democratizing analytics … It is really about getting insight in a fraction of the time with less skill than is possible today.”

3. Big Data Will Supplement, Not Replace, Researchers’ Work

Many of today’s big data platforms are so advanced that it’s understandable if people start expecting a time not long from now when they replace hardworking humans. Dr. Aidan Slingsby, a senior lecturer and director of a data science degree program at City University of London, doesn’t see that outcome as likely, especially for applications such as using big data to assist with market research. “Data science helps identify correlations. So data scientists can provide patterns, networks, dependencies that may not have been otherwise known. But, for data science to really add an extra layer of value, it needs a market researcher who understands the context of the information to interpret the ‘what’ from the ‘why’.”

Ben Page, chief executive of Ipsos MORI, echoed that sentiment when he said, “Market research is effectively about the understanding of human beings and their behavior and motivations. It is a realm that data science cannot penetrate on its own.” As a case in point, Ipsos MORI has more than 1,000 data scientists within its global team, but the company employs other professionals, including ethnographers and behavioral scientists, too.

4. Cloud Data Will Shape Customer Experiences

Cloud computing is a major topic of discussion when people weigh in about big data trends.
Here are a few things those in the know expect to see regarding what’s happening now and what could occur soon when users combine big data with cloud computing. Nick Piette, director of product marketing and API services at Talend, believes that one of the future trends of big data analytics relates to using the information to enhance customer experiences. He also thinks that having a cloud-first mindset will help. “More and more brand interactions are happening through digital services, so it’s paramount that companies find ways to improve updates and deliver new products and services faster than they ever have before,” he said. How does the cloud fit into that? “With speed in mind, companies will be led to adopt a modern cloud-native mindset that promotes containerized deployments using modern microservices architectures that are developed and managed using the latest DevOps methodologies,” Piette predicted.

5. The Increasing Coexistence of Public and Private Clouds

Many of today’s companies are already working in the cloud, making a shift to it or strongly considering doing it soon. Jeff Clarke, chief operating officer at Dell Technologies, believes that 2020 will be the first year for businesses to realize they can have both public and private clouds without choosing one or the other. He noted: “The idea that public and private clouds can and will coexist becomes a clear reality in 2020. Multicloud IT strategies supported by hybrid cloud architectures will play a key role in ensuring organizations have better data management and visibility while also ensuring that their data remains accessible and secure.”

Clarke also looks forward to a future where private clouds do not exist only within data centers. “As 5G and edge deployments continue to roll out, private hybrid clouds will exist at the edge to ensure the real-time visibility and management of data everywhere it lives.
That means organizations will expect more of their cloud and service providers to ensure they can support their hybrid cloud demands across all environments.”

6. Cloud Technology Will Make Big Data More Accessible

One of the main benefits of cloud computing is that it allows people to access apps from anywhere. Andy Monfried, CEO and founder of Lotame, sees a time when most people in the workforce will know how to work with self-service big data apps. He explained: “In 20 years, big data analytics will likely be so pervasive throughout business that it will no longer be the domain of specialists. Every manager, and many nonmanagerial employees, will be assumed to be competent in working with big data, just as most knowledge workers today are assumed to know spreadsheets and PowerPoint. Analysis of large datasets will be a prerequisite to almost every business decision, much as a simple cost/benefit analysis is today.”

He then tied that prediction to big data technologies that work in the cloud. “This doesn’t mean, however, that everyone will have to become a data scientist. Self-service tools will make big data analysis broadly accessible. Managers will use simplified, spreadsheet-like interfaces to tap into the computing power of the cloud and run advanced analytics from any device.”

Source: SmartDataCollective

The post 6 Important Big Data Future Trends appeared first on Data Science PR. Via https://datasciencepr.com/6-important-big-data-future-trends/?utm_source=rss&utm_medium=rss&utm_campaign=6-important-big-data-future-trends

Bitcoin transactions can be tracked through block explorers and dedicated services offered by some cryptocurrency exchanges. Unlike banks, where it can be difficult to find details about transactions that are currently being processed, as well as those that have been completed, the blockchain offers far greater levels of transparency.
Anyone can search for information based on particular Bitcoin addresses, block numbers and transaction hashes. This, when coupled with wallet explorers, means it becomes possible to draw connections between addresses and the wallets being used to hold Bitcoin. Of course, this will prove exceptionally useful if you’re worried about whether your crypto has gone to the right destination, or are wondering whether the transaction has been verified. But it’s worth remembering that these tools are also practical for law enforcement agencies that are attempting to clamp down on BTC being used for illegal means.

The post How can I track Bitcoin transactions in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/bitcoin-transactions/?utm_source=rss&utm_medium=rss&utm_campaign=bitcoin-transactions

A Bar Chart is also known as a Bar Graph or Column Graph. The classic Bar Chart uses either horizontal or vertical bars (column chart) to show discrete, numerical comparisons across categories. One axis of the chart shows the specific categories being compared and the other axis represents a discrete value scale. Bar Charts are distinguished from Histograms, as they do not display continuous developments over an interval. A Bar Chart’s discrete data is categorical data and therefore answers the question of “how many?” in each category. One major flaw with Bar Charts is that labelling becomes problematic when there are a large number of bars.

The post What is a Bar Chart in Data Visualization? appeared first on Data Science PR. Via https://datasciencepr.com/what-is-a-bar-chart-in-data-visualization/?utm_source=rss&utm_medium=rss&utm_campaign=what-is-a-bar-chart-in-data-visualization

Area Graphs are Line Graphs but with the area below the line filled in with a certain colour or texture. Area Graphs are drawn by first plotting data points on a Cartesian coordinate grid, joining the points with a line and finally filling in the space below the completed line.
Like Line Graphs, Area Graphs are used to display the development of quantitative values over an interval or time period. They are most commonly used to show trends, rather than convey specific values. Two popular variations of Area Graphs are Grouped and Stacked Area Graphs. Grouped Area Graphs start from the same zero axis, while Stacked Area Graphs have each data series start from the point left by the previous data series.

The post Area Graph in Data Visualization appeared first on Data Science PR. Via https://datasciencepr.com/area-graph-in-data-visualization/?utm_source=rss&utm_medium=rss&utm_campaign=area-graph-in-data-visualization

KEY POINTS

Amazon, Apple, Facebook and Microsoft closed at new all-time highs Tuesday, a day when the Nasdaq Composite Index hit a new record. Big Tech stocks have fared better than most during the coronavirus pandemic as newly remote workers have come to rely more than ever on online services. Thanks to their large market caps, they’ve also helped buoy the stock market, which has staged a comeback despite huge unemployment numbers sparked by widespread stay-at-home orders.

Facebook, Apple and Amazon all rose more than 3% Tuesday while Microsoft popped about 0.8%. The tech-heavy Nasdaq Composite rose 0.3%, briefly rising above 10,000 for the first time. The S&P 500 was down about 0.8%. The combined market value of the four companies is now close to $5 trillion, with Apple claiming the top spot at nearly $1.5 trillion. Facebook is the only one of the group with a market cap below $1 trillion. Google parent company Alphabet is the only one of the five largest tech stocks not to close at an all-time high. It’s still about 5% behind its all-time high of $1,524.87, where it closed on Feb. 19.

The post Amazon, Apple, Facebook and Microsoft close at all-time highs as Big Tech rallies back from coronavirus appeared first on Data Science PR. Via https://datasciencepr.com/amazon-apple-facebook-and-microsoft-close-at-all-time-highs-as-big-tech-rallies-back-from-coronavirus/?utm_source=rss&utm_medium=rss&utm_campaign=amazon-apple-facebook-and-microsoft-close-at-all-time-highs-as-big-tech-rallies-back-from-coronavirus

Succeeding in retail isn’t easy. Shifting customer tastes, shrinkage risks and reduced traffic at physical stores are just some of the challenges retailers may face as they try to operate profitably. Many are relying on data science and business intelligence (BI) tools to get ahead of competitors and stay resilient in a challenging marketplace.

Here are five trends in business intelligence and data science for retail worth knowing:

1. Artificial Intelligence-Powered Video Analytics

Artificial intelligence (AI) can spot patterns and trends humans may miss without high-tech help. Most retail locations already have security cameras installed to safeguard against shoplifting, and some combine those with AI. Doing that gives the stores new insights that drive improved performance. For example, some cameras used in grocery stores have object-recognition capabilities that tell sales floor team members about stock shortages. AI can also determine some broad characteristics, such as customer gender and approximate age. Such a system might reveal that females under 40 are more likely to select items from a shelf containing organic snacks, but men in the same age group usually ignore those products.

Many retailers use AI video analytics because they find it suits a variety of use cases. It could help with checkout management by assessing when the number of people waiting in a queue gets too high. If a retailer installs an interactive display with an AI-equipped camera, the collected data could show how many shoppers stop to look at the promotion or how long they linger. Moreover, AI could act as a problem solver. If it detects people’s average height, the retailer may adjust a display for better accessibility. The technology could also show which areas of a store have the most shoppers, triggering managers to send more employees to those departments.

2. Using Data to Offer Personal Styling Suggestions to Shoppers

Virtually everyone has firsthand experience with spending a day shopping for clothes and finishing it empty-handed. Many people have well-formed ideas about the kinds of attire they want. The main problem is that they don’t know which retailers will have it, so they spend hours engaged in a useless search. That’s likely why services like Stitch Fix (which sends clothes to people’s doorsteps based on their style preferences) have become so popular.
There are currently more than 100 data scientists on the company’s team who provide information to support stylists and the business overall. Algorithms understand things like a person’s size and budget, while the style experts use their judgment to select wardrobe pieces. Stitch Fix’s inventory consists of more than 1,000 brands, including the company’s private labels. It’s one of the leading enterprises using data to find customers the styles they love, but it’s not the only option.

Mada is a newer company on the market taking a similar approach, although it seems a little less robust. Users start by filling out a style quiz. After submitting it, they get tailored recommendations through a swipe-friendly interface. Mada currently has more than 2,600 brand partners. It’s easy to envision how other retailers offering apparel could do this, too. For example, people might get emailed suggestions for new products to buy based on their past purchases. When retail brands successfully present options to consumers that they genuinely want, the sales potential goes up.

3. Relying on Location-Based Business Intelligence Before Opening Physical Stores or Renewing Leases

With some physical stores receiving less traffic than they once did, it’s especially important for commercial investors to carefully weigh the pros and cons of a potential new location. Many of them are increasingly dependent on data analytics to make investment and leasing decisions. Statistics about population, traffic or tourism could give an investor useful business intelligence to guide a decision about whether to open a new store in a location that made it onto a shortlist. Then, digging into data about the number of visitors could help a brand determine whether or not to renew a lease. This kind of information can also provide glimpses into customers’ needs.
For example, if a high percentage of people leave a shopping center and immediately head across the street to a dollar store, that trend could show investors how discount chains remain relevant. Or perhaps business intelligence analytics indicate that average sales in a mall food court are down, while lots of shoppers ultimately go to an adjacent Italian restaurant outside the mall. That could mean people are not satisfied with the mall’s food options.

When looking for trends like that, investors must be careful not to put too much emphasis on one cause-and-effect link. Could something else, like a lack of seating, discourage people from the food court? If so, the fix might be as straightforward as adding more tables and chairs. In any case, location-based intelligence could help retail property investors avoid costly mistakes.

4. Data Wrapping

Another of the trends in business intelligence and data science for retail involves something called data wrapping. It’s a method of bundling information with a brand’s products or services. Data wrapping commonly happens when financial brands offer budgeting apps or show breakdowns of how someone’s credit score changed from month to month. More retail brands will likely start using it, too, though.

For example, a book retailer might offer an app that lets people record each title read by scanning a store-specific code. The application would then break down the totals and show the person how many pages they read per year or the average amount of time spent reading. Data wrapping can improve customer satisfaction and increase purchase potential. The bookstore could give someone a $10 coupon once they read 10,000 pages, for example. Retailers also win out because they get statistics about the authors, titles or genres their customers like the most.

5. Dynamic Pricing

When Quantzig published its top four retail analytics trends of 2020, dynamic pricing was on the list.
It allows retailers to change prices in the moment based on data about customer demand. Amazon led the way with dynamic pricing, implementing the practice several years ago. It now changes prices more than 2 million times per day based on customer intent and desire. Other retailers like Target and Walmart do it, too. If the goal is to compete with Amazon, though, many will likely lose the battle. The better approach is to use dynamic pricing to achieve a specific aim, such as boosting in-store traffic or increasing the likelihood of a certain consumer group purchasing items.

Dynamic pricing must be applied with care, however. Brands that try it haphazardly or offer different prices for online purchases versus those made in person risk losing their loyal customers.

Source: SmartDataCollective

The post Top 5 Trends In Business Intelligence And Data Science For Retail in 2020 appeared first on Data Science PR. Via https://datasciencepr.com/top-5-trends-in-business-intelligence-and-data-science-for-retail-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=top-5-trends-in-business-intelligence-and-data-science-for-retail-in-2020

It’s a well-known fact that in data science, people spend most of their time cleaning or preprocessing data. Sometimes as much as 70% of a project’s time may go to reorganizing your data before you can start doing some predictive analytics with it! Therefore, knowing how to combine your data to prepare it for analysis becomes one of the key abilities to have under your data scientist belt.

That said, it’s super important to be in control of using different joins. Yes, there are other tools and terms, such as appending, merging, and concatenating data. However, joins are conceptually key to grasp. And once you understand join types and learn when and how to apply them well, everything else will fall into place. That’s why in this article, we’ll go through the two most fundamental types of joins:

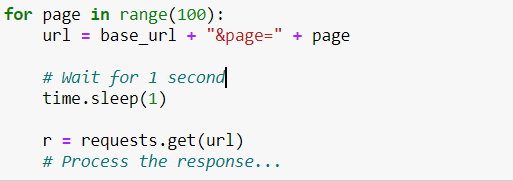

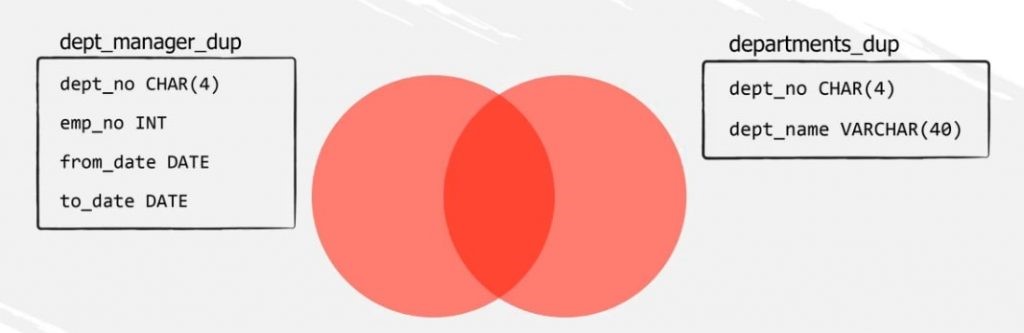

How to Apply JOINs – through JOIN Statements?

Joins can be applied with multiple software tools for data science, such as SQL or Python. With R, you need to use a substitute (‘merge’) to create a join, but you can still arrive at an output that conceptually corresponds to a join. So, we’ve presented joins as a concept for data interaction. But how are joins technically applied? By using join statements. To illustrate this point, we’ll show you how to create an INNER, LEFT, and RIGHT JOIN in MySQL. Why SQL? Because it’s one of the most popular query languages that allow you to work with relational database management systems (RDBMS).

How Does Relational Algebra Help Us Understand JOINs?

Bringing a relational algebra tool into the picture will make your life much easier when you need to use joins! The algebraic tool in question is the Venn diagram, which shows the logical relation between a finite collection of several sets. In SQL, relational algebra translates into relational schemas – the RDBMS tool that will help your strategy for linking tables. Also, don’t forget that in the case of relational schemas, the sets of the Venn diagram are in fact separate data tables that act as our data sources. So, let’s see how we can create a join in MySQL.

How to set up the database?

We’ll use the “employees” database which you can download here. Let’s focus on the “employees” and the “dept_emp” tables.

How do JOINs and relational schemas work?

Technically, SQL JOINs show result sets containing fields derived from two or more tables. Therefore, we can use a join to relate the “employees” and the “dept_emp” tables. Then, from the “employees” table, we can extract information for a certain group of individuals, such as employee number, first name and last name. And from the “dept_emp” table, we can extract the department number and the start date of the labor contract.

But how can we actually relate the two tables? By designating a common key, also known as a common field, matching column or related column. It’s a column you can find in both the “employees” and “dept_emp” tables. In our example, this is the employee number column, “emp_no”. One thing to keep in mind: when joining our data sources, it is crucial to correctly specify the matching columns on which we are going to combine our data!

How does the INNER JOIN work?

Let’s refer to the following Venn diagram.

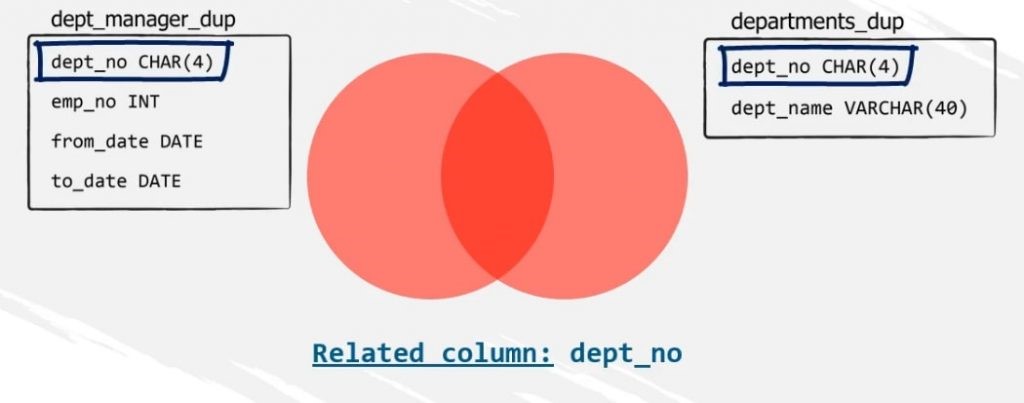

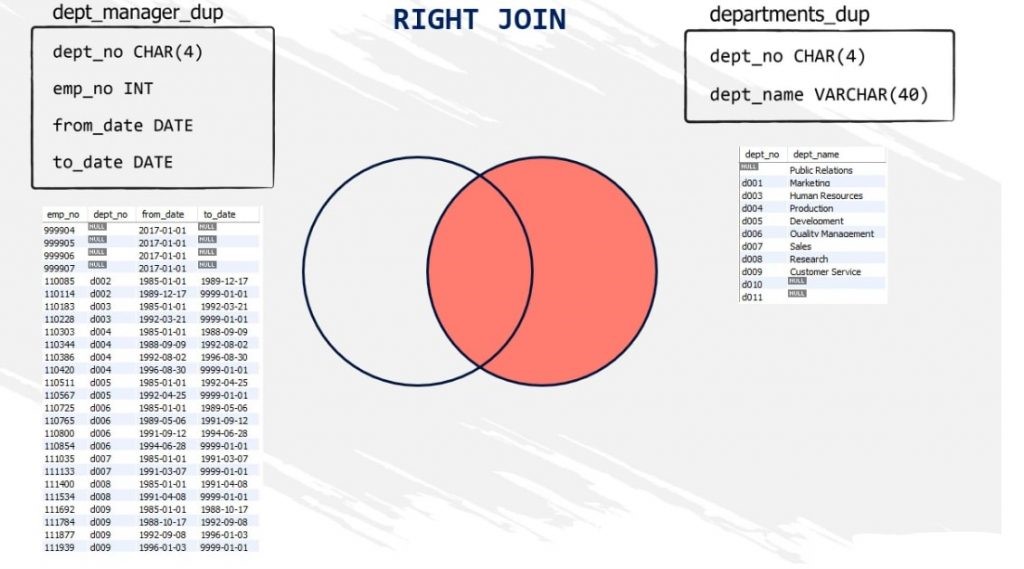

The “dept_manager_dup” table is represented as the set on the left, while the “departments_dup” table is represented by the set, or the circle, on the right. So, which will be the related column here? We know that we can join tables with columns of the same type and meaning. Therefore, the related column of these two tables will be the “dept_no”.

The shared area between the two circles, filled with red, represents all records that belong to both the “dept_manager_dup” and the “departments_dup” tables. We also call this area a result set.

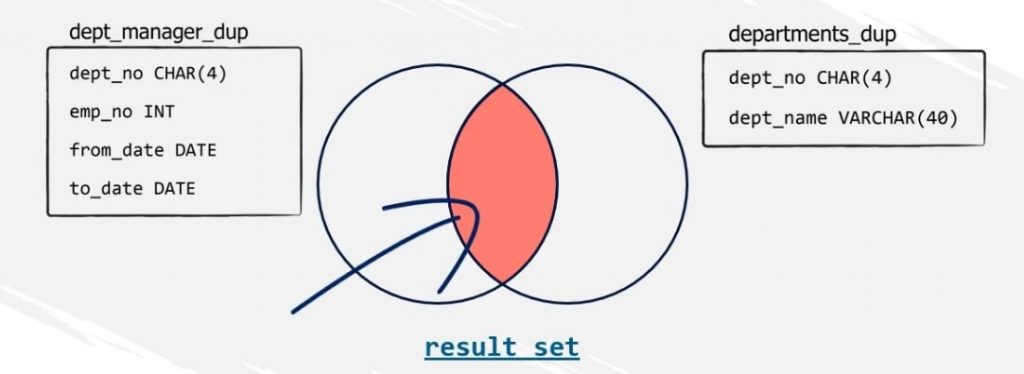

And the INNER JOIN in SQL can help us extract this result set. By the way, the values that do not match will not appear in our final output. Logically, they are called non-matching values, or non-matching records. The SQL statement we need to obtain the desired result set is a SELECT statement.

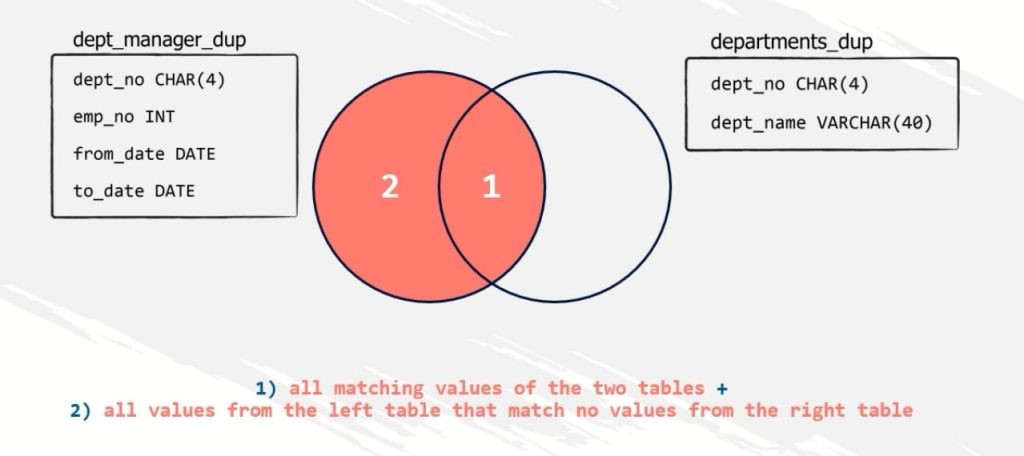
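As a rough, runnable sketch of the kind of SELECT statement described here, the snippet below uses Python’s built-in sqlite3 module instead of MySQL so that it runs without a database server. The table names and the “dept_no” key come from the article; the other columns and all sample rows are hypothetical and are not taken from the real “employees” database.

```python
import sqlite3

# In-memory SQLite stands in for MySQL; the INNER JOIN syntax is the same.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE dept_manager_dup (emp_no INTEGER, dept_no TEXT);
    CREATE TABLE departments_dup (dept_no TEXT, dept_name TEXT);

    -- Hypothetical sample rows, invented for illustration only.
    INSERT INTO dept_manager_dup VALUES
        (110022, 'd001'), (110085, 'd002'), (110183, 'd003'), (999904, NULL);
    INSERT INTO departments_dup VALUES
        ('d001', 'Marketing'), ('d002', 'Finance'),
        ('d010', 'Research'), (NULL, 'Unassigned');
""")

# Keep only the rows whose dept_no appears in both tables.
inner_rows = conn.execute("""
    SELECT m.emp_no, m.dept_no, d.dept_name
    FROM dept_manager_dup AS m
    INNER JOIN departments_dup AS d ON m.dept_no = d.dept_no
    ORDER BY m.emp_no
""").fetchall()

for row in inner_rows:
    print(row)
```

With this sample data, only the managers of “d001” and “d002” come back. The rows for “d003” and “d010” have no partner in the other table, and the two rows with a NULL key are not paired with each other.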

Note that with the INNER JOIN, only non-null matching values are in play! Null values will not be matched, because it would make no sense to do so! What if such matching values did not exist? Simply, the result set will be empty. There will be no link between the two tables. To sum things up for the INNER JOIN, the query above will lead to a new set combining the records from the “dept_manager_dup” and “departments_dup” tables whose values in the matching columns are identical.

How Does the LEFT JOIN Work?

Like an INNER JOIN, a LEFT JOIN is written as a clause in a SELECT statement. It returns all records of the table designated to be on the left side of the join, together with the records from the right-side table that match on the common field. However, if no record from the right table matches a record from the left table on the common field, null values are displayed in the right table’s columns! Here’s how it looks.

Compared to the INNER JOIN, the result set, colored in red, will include not only the common area but also the rest of the area of the left table. Here’s the SELECT statement that will deliver this output to us.
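A minimal sketch of such a LEFT JOIN statement follows, again run through Python’s built-in sqlite3 module rather than MySQL. The table names and the “dept_no” key follow the article, while the remaining columns and the sample rows are assumptions made up for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE dept_manager_dup (emp_no INTEGER, dept_no TEXT);
    CREATE TABLE departments_dup (dept_no TEXT, dept_name TEXT);

    -- Hypothetical sample rows, invented for illustration only.
    INSERT INTO dept_manager_dup VALUES
        (110022, 'd001'), (110085, 'd002'), (110183, 'd003');
    INSERT INTO departments_dup VALUES
        ('d001', 'Marketing'), ('d002', 'Finance'), ('d010', 'Research');
""")

# dept_manager_dup is the left table, so every one of its rows is kept.
left_rows = conn.execute("""
    SELECT m.emp_no, m.dept_no, d.dept_name
    FROM dept_manager_dup AS m
    LEFT JOIN departments_dup AS d ON m.dept_no = d.dept_no
    ORDER BY m.emp_no
""").fetchall()

for row in left_rows:
    print(row)
```

All three managers appear in the output. The manager of “d003” has no matching department row, so the “dept_name” column is NULL for that record (shown as None in Python), while “d010” is absent because it exists only in the right table.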

There are two important takeaways about the LEFT JOIN. First, it includes more than just the inner part of the combination of the two data sets, which is why it can also be called a LEFT OUTER JOIN. Second, the order of the tables matters! Whether you set “dept_manager_dup” to be on the left and “departments_dup” to be on the right or vice versa can lead to quite different results! So, paying attention to this when writing your LEFT JOIN helps ensure you obtain the result you’re aiming for!

How does the RIGHT JOIN work?

A RIGHT JOIN, or RIGHT OUTER JOIN, is the mirror image of a LEFT JOIN. It returns all records of the table designated to be on the right side of the join, together with the records from the left-side table that match on the common field. Here’s an example.

Now let’s discuss the workaround we promised at the beginning of the article. Is it possible to obtain the same output if we execute a right-join query or a left-join query with inverted tables? Definitely yes! And, that’s exactly what you need to do in case you’re using a software tool that doesn’t support RIGHT JOINs! Therefore, these two queries will lead to the same result set!
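The equivalence can be checked with a small sketch, assuming the same hypothetical sample tables as a stand-in for the article’s data and using Python’s built-in sqlite3 module instead of MySQL. SQLite is itself a tool that lacked RIGHT JOIN before version 3.39, so the RIGHT JOIN query is executed only when the installed version supports it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE dept_manager_dup (emp_no INTEGER, dept_no TEXT);
    CREATE TABLE departments_dup (dept_no TEXT, dept_name TEXT);

    -- Hypothetical sample rows, invented for illustration only.
    INSERT INTO dept_manager_dup VALUES
        (110022, 'd001'), (110085, 'd002'), (110183, 'd003');
    INSERT INTO departments_dup VALUES
        ('d001', 'Marketing'), ('d002', 'Finance'), ('d010', 'Research');
""")

# RIGHT JOIN: keep every row of departments_dup (the right table).
right_join = """
    SELECT m.emp_no, d.dept_no, d.dept_name
    FROM dept_manager_dup AS m
    RIGHT JOIN departments_dup AS d ON m.dept_no = d.dept_no
    ORDER BY d.dept_no
"""

# Workaround: the same tables inverted, joined with LEFT JOIN.
inverted_left_join = """
    SELECT m.emp_no, d.dept_no, d.dept_name
    FROM departments_dup AS d
    LEFT JOIN dept_manager_dup AS m ON m.dept_no = d.dept_no
    ORDER BY d.dept_no
"""

inverted_rows = conn.execute(inverted_left_join).fetchall()
print(inverted_rows)

# Older SQLite builds reject RIGHT JOIN, so only compare where it is available.
if sqlite3.sqlite_version_info >= (3, 39, 0):
    right_rows = conn.execute(right_join).fetchall()
    print("Same result set:", right_rows == inverted_rows)
```

In both forms, every department is kept and “d010”, which has no manager in the sample data, appears with a NULL employee number.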


Some Final Words on JOINs…

If you want to dig deeper into the field of data science, it’s essential that you understand INNER, LEFT, and RIGHT JOINs well. In fact, all other types of joins, such as FULL OUTER or CROSS JOINs, originate from here. And that makes the knowledge of how joins work even more fundamental. You can find more details about the INNER, LEFT, RIGHT, and other join types in the SQL tutorials in our blog, so make sure you read them, too.

Source: 365 Data Science Blog

The post JOINs – What Are They and Why Are They So Relevant for Data Science? appeared first on Data Science PR. Via https://datasciencepr.com/joins-what-are-they-and-why-are-they-so-relevant-for-data-science/?utm_source=rss&utm_medium=rss&utm_campaign=joins-what-are-they-and-why-are-they-so-relevant-for-data-science

For many observing and participating in the crypto industry, conversations that include “blockchain” and “energy” often revolve around the resources required to mine proof-of-work blockchains like Bitcoin and their environmental impact. In many ways, this is for good reason: Much of Bitcoin’s mining is done using cheap, coal-powered electricity in China. While initiatives like Soluna’s wind-powered mining farm provide a more sustainable path forward, energy consumption and waste remain a challenge for the proof-of-work crypto community, but this is only one of the ways that the blockchain and energy industries intersect.

For the past several years, there has been speculation about blockchain technology’s ability to actually make the energy sector more efficient. The energy sector today is a highly transactional and complex system with multiple sources, suppliers, distributors and middlemen, and several crypto startups have emerged to streamline existing processes and create new functionality. Areas of opportunity include commodities trading, peer-to-peer energy trading, elimination of middlemen retailers, data management, accounting and automation.
The post How do the blockchain and energy industries intersect in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/how-do-the-blockchain-and-energy-industries-intersect-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=how-do-the-blockchain-and-energy-industries-intersect-in-2020

An ex-Google tech lead teaches you how to learn Python programming in this tutorial. You will learn the fundamentals of how to learn Python, server backends and frameworks, databases, frontend, and pet projects, and examine what is involved in setting up a Python project that can help you land a job in tech!

The post How to Learn Python Tutorial – Easy & simple in 2020 appeared first on Data Science PR. Via https://datasciencepr.com/how-to-learn-python-tutorial-easy-simple-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=how-to-learn-python-tutorial-easy-simple-in-2020

How Goodyear is transforming from a manufacturing company to a mobility-led technology company

6/11/2020

In this conversation, Bernard Marr speaks with Chris Helsel, SVP and Chief Technology Officer at Goodyear. They discuss how the company is using innovative technologies to stay ahead of the competition.

The post How Goodyear is transforming from a manufacturing company to a mobility-led, technology company appeared first on Data Science PR. Via https://datasciencepr.com/how-goodyear-is-transforming-from-a-manufacturing-company-to-a-mobility-led-technology-company/?utm_source=rss&utm_medium=rss&utm_campaign=how-goodyear-is-transforming-from-a-manufacturing-company-to-a-mobility-led-technology-company

When you format a data science cover letter, there are 6 keys to success:

Why is the format of a data science cover letter important?

The format of your data science cover letter is critical to making a positive first impression. A clean and polished format keeps the focus on the content and conveys attention to detail. Conversely, a sloppy layout signals a lack of professionalism and can instantly eliminate you from the race for your dream job.

So, how to format a data science cover letter for the win?

Let’s go through them together.

Match your data science resume style

Using the same style in both your cover letter and your data science resume will give your job application a cohesive and elegant look. Also, make sure you align both with the image of the employer. A conservative company won’t appreciate ornate fonts and extravagant design. Keep in mind that your cover letter is also a business letter; and, above all, a powerful tool to get a data science interview invitation. So, its style should reflect that.

Choose a crisp font

With so many font styles out there, choosing the best one could be a challenge. Our advice is: Keep it simple and clean. Yes, when you format a data science cover letter, legibility is a top priority. Flashy custom fonts and special characters are not only distracting, but they’re also hard to read, both for Applicant Tracking Systems (ATS) and humans. There’s nothing wrong with classics like Calibri, Verdana, and Cambria (among many others). Another way to ensure your data science cover letter is readable and scanner-friendly is to select the right font size. Stay on the safe side and go for 10-12 pt. That’s how you’ll tick two boxes: easy-to-read and easy-on-the-eyes.

Cut down the length

Less is more. A single one-sided page with 250-400 words is completely adequate for an efficient cover letter. Resist the temptation and leave out any details that the hiring manager can find on your resume. Of course, you can be brave and experiment with a super-concise cover letter of 150 words.
But while this one is sure to be read, you run the risk of omitting some important information.

Remember that spacing is important, too

When it comes to spacing, consistency is key. To achieve a coherent look, opt for single-line spacing after each section of your data science cover letter (contact information, greeting, introduction, body, closing, and sign-off). Remember, packing your cover letter with quality content doesn’t equal typing one giant wall of text. That would make it look cramped and messy. On the other hand, spacing and shorter paragraphs balance the page and let your document breathe. Plus, they make the content much easier to process.

It’s okay to adjust the margins… But keep the alignment to the left

But don’t go lower than ¾ or ½ inch… And only if you really need the extra space. When you format a data science cover letter, you need to make sure it doesn’t end up looking cluttered and squished. In other words, it’s best to stick to the business letter format rules and set 1-inch margins on all sides. That leaves plenty of margin space for printing and creates an elegant layout. Speaking of best practices, always left-align your cover letter content. No justification or indentation is needed, as they go against the standards.

Save as… the appropriate file type

Saving your data science cover letter in the right file format is vital. To make sure the reader can open and view it, rely on common document formats, such as .doc or .pdf. Both are widely accepted and usually cause no compatibility issues.

How to format a data science cover letter: Additional resources

If you need help to format a data science cover letter, you can browse the wide choice of cover letter builders available online. But how do you pick the best out of many? To make your search easier, we’ve made a quick list of the cover letter builders that offer the best features and useful relevant resources.

Source: 365 Data Science Blog

The post How to Format a Data Science Cover Letter in 2020?
appeared first on Data Science PR. Via https://datasciencepr.com/how-to-format-a-data-science-cover-letter-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=how-to-format-a-data-science-cover-letter-in-2020 |

DataSciencePR

Data Science PR is the leading global niche data science press release services provider.

Archives |

RSS Feed

RSS Feed