
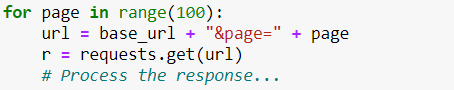

First, let’s consider the matter from an ethical point of view. Your program should be respectful to the site owner. Remember that every time you load a web page, you’re making a request to a server. When you’re just a human with a browser, there’s not much damage you can do. With a Python script, however, you can execute thousands of requests a second, intentionally or unintentionally. The server then needs to process every request individually. This, combined with the normal user traffic, can overload the server, which can slow the website down or even bring it down altogether. Such a situation usually degrades the experience of real users and can cost the website owner valuable customers.

Obviously, we don’t want that. In fact, flooding a server on purpose is known as a denial-of-service (DoS) attack, or a distributed denial-of-service (DDoS) attack when the traffic comes from many machines at once, and it is treated as a crime in many jurisdictions, so we had better avoid it. Given the potential damage this simple technique can do, servers have started employing automatic defense mechanisms against it.

One form of such protection against spammers is to temporarily block a user from the service if the server detects a large amount of activity in a short period of time. So, even if you are not sending huge numbers of requests, you may get blocked as a preventive measure. That’s precisely why it is important to know how to limit your rate of requests.

## How to Limit Your Rate of Requests When Scraping?

Let’s see how to do this in Python. It is actually very easy. Suppose you have a setup with a `for` loop in which you make a request on every iteration.
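A loop of that kind might look like the minimal sketch below. It assumes Python's standard-library `urllib.request` as the HTTP client (the original setup may use a different library), and the helper names `fetch()` and `scrape_all()` are hypothetical. The page-fetching function is passed in as a parameter so the loop can be exercised without touching the network.

```python
import urllib.request
from typing import Callable, List


def fetch(url: str) -> bytes:
    """Download one page with the standard-library HTTP client."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read()


def scrape_all(urls: List[str], fetch_page: Callable[[str], bytes] = fetch) -> List[bytes]:
    """Request every URL in turn, with no pause between requests (hypothetical helper)."""
    pages = []
    for url in urls:
        # One request per iteration: the loop moves on as soon as the
        # server answers, so requests can pile up very quickly.
        pages.append(fetch_page(url))
    return pages


# Hypothetical usage (example.com stands in for the site being scraped):
# pages = scrape_all([f"https://example.com/page/{i}" for i in range(1, 101)])
```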

Depending on the other actions you take in the loop, this can iterate extremely fast. So, to slow it down, we will simply tell Python to wait a certain amount of time between requests. To achieve this, we are going to use the `time` module from Python's standard library.

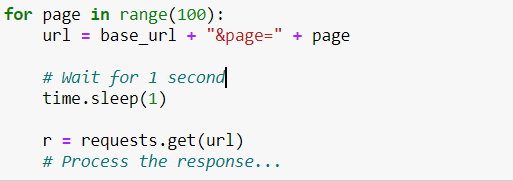

It has a function called `sleep()` that pauses (“sleeps”) the program for the specified number of seconds. So, if we want at least 1 second between consecutive requests, we can call `sleep(1)` inside the `for` loop, right before each request.
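As a sketch under the same assumptions (standard-library `urllib.request`, hypothetical helper names `fetch()` and `scrape_politely()`), the throttled loop only adds a call to `time.sleep()` at the top of each iteration. The `delay` parameter defaults to 1 second; the `sleep` parameter exists only so the pause can be replaced in tests and is simply `time.sleep` in normal use.

```python
import time
import urllib.request
from typing import Callable, List


def fetch(url: str) -> bytes:
    """Download one page with the standard-library HTTP client."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read()


def scrape_politely(
    urls: List[str],
    delay: float = 1.0,
    fetch_page: Callable[[str], bytes] = fetch,
    sleep: Callable[[float], None] = time.sleep,
) -> List[bytes]:
    """Request every URL in turn, pausing `delay` seconds before each request."""
    pages = []
    for url in urls:
        # Wait first, then request: consecutive requests start at least
        # `delay` seconds apart, plus however long each response takes.
        sleep(delay)
        pages.append(fetch_page(url))
    return pages
```

Because the pause comes before the request, the very first request is delayed too. If you would rather start immediately, you could skip the sleep on the first iteration; the spacing between later requests would still be at least `delay` seconds. Calling `scrape_politely(urls, delay=2.0)` would wait two seconds before every request instead of one.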

This way, Python always waits 1 second before making each request. That keeps the load we put on the server low and makes it much less likely that we get blocked while scraping the webpage.

Source: 365 Data Science Blog. The post How to Limit Your Rate of Requests When Web Scraping in 2020? appeared first on Data Science PR. Via https://datasciencepr.com/how-to-limit-your-rate-of-requests-when-web-scraping-in-2020/?utm_source=rss&utm_medium=rss&utm_campaign=how-to-limit-your-rate-of-requests-when-web-scraping-in-2020
